LiveView’s unique integration between server and client allows it to drastically optimize both latency and bandwidth, leading to user experiences that are faster and smoother than any other client-server combo out there. This weekend we have merged a pull request that goes one step further and makes client-side rendering between 3 to 30x faster.

The goal of this article is to document all LiveView optimizations we have designed and employed over the last 5 years.

As we will learn, most optimizations here come for free to LiveView developers and are only made possible thanks to the Erlang VM’s ability to hold millions of stateful WebSocket connections at once.

But first things first.

What is LiveView?

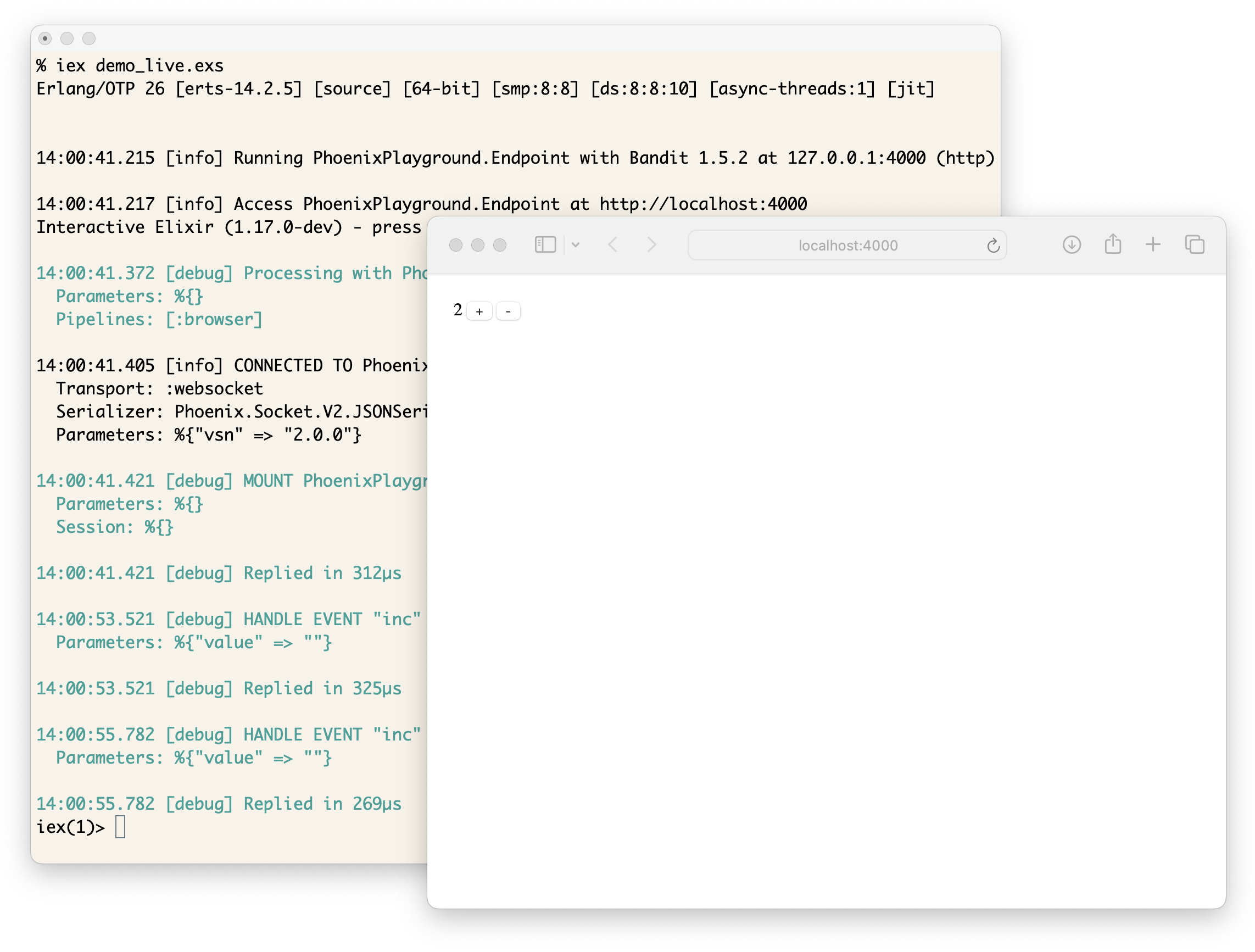

LiveView is a library for the Phoenix web framework that allows you to write rich, real-time user experiences with server-rendered HTML. Your LiveView code runs on the server and LiveView comes with a small JavaScript client that connects the two.

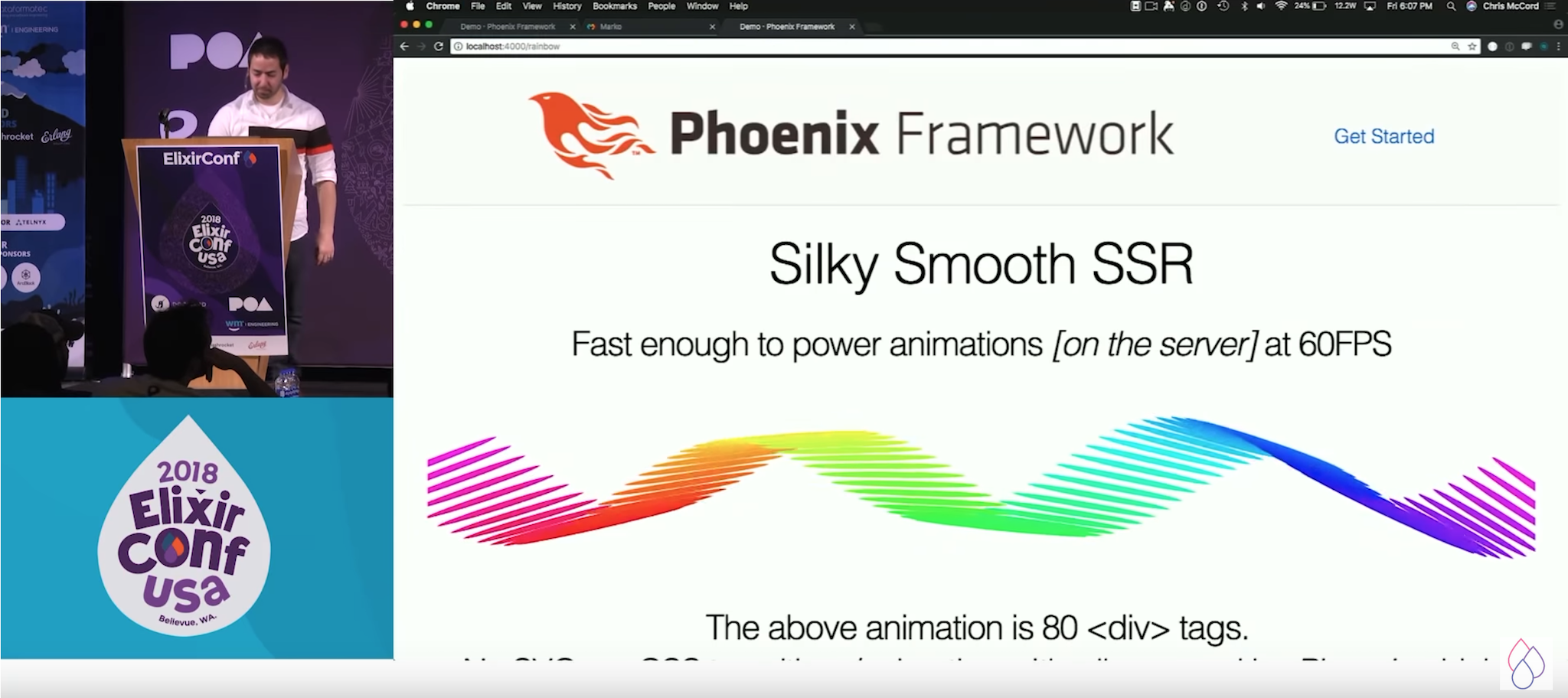

When LiveView was announced, one of the examples Chris McCord presented was the “rainbow” demo:

The idea is that we could animate a rainbow on a web page, by rendering divs with style attributes on the server and sending them to the client at 60 frames per second. To make rendering efficient on the client, Phoenix LiveView used (and still uses) the morphdom library. morphdom parses new HTML sent by the server and morphs the browser’s DOM accordingly. Prior to the conference, we tried the demo between Poland and East Coast, and it worked without jitters or stutters.

Immediately after the presentation, I remember talking to Chris and Dan McGuire that we could do better. If you looked at the rainbow demo, the template would roughly look like this:

<h1>Silky Smooth SSR</h1>

<p>Fast enough to power animations [on the server] at 60FPS</p>

<div>

<%= for bar <- @rainbows do %>

<div style="color: <%= bar.color %>; height: <%= bar.height %>px" />

<%= end %>

</div>

<p>The above animation is <%= @count %> <div> tags</p>

<p>...</p>

The LiveView demo would send the whole template on every frame and the browser would patch it onto the page. However, if we look at the template, we can see only parts of the template actually change! By sending the whole template over and over again, we are just wasting bandwidth.

While bandwidth was a concern, I was worried that the programming model would not scale: the larger the page, the more boilerplate the server will send on every single update. If you take a complex page with forms, widgets, etc, it is just not acceptable to send several KBs of data, every time the user presses a key inside an input, only to show an error message.

Unfortunately, this is still the programming model that many server-rendered applications implement: they send whole HTML chunks and use libraries like morphdom to update the page. While morphdom can handle those chunks just fine, the costs in latency and bandwidth can quickly become too steep, either leading to inferior user experiences or requiring the developer to spend countless hours fine-tuning their applications to acceptable metrics.

Let’s learn how LiveView addresses these concerns for us.

Optimization #1: splitting statics from dynamics

The first optimization we applied to LiveView is to split statics from dynamics. Let’s start with a smaller template and then we will revisit the rainbow demo. Take this template:

<p>counter: <%= @counter %></p>

We can see from this template that, <p>counter: and </p> are static. They do not have interpolated content and therefore they won’t ever change. @counter is the dynamic bit. Can we somehow leverage this?

Historically, Phoenix used .eex templates, which stands for “Embedded Elixir”, to render pages. In a very simplistic way, you could think that the compiler for .eex templates would convert the template above to something like this:

Enum.join(["<p>counter: ", @counter, "</p>"], "")

Once you render the template, you execute the code above, and you get a string back (actually, we don’t build a string but an IO list, which provides many other performance and memory benefits).

To address the problems above, we introduced .leex templates, which stand for “Live Embedded Elixir”. The idea is that we would compile the template above to this:

%Phoenix.LiveView.Rendered{

static: ["<p>counter: ", "</p>"],

dynamic: [@counter]

}

In other words, we build a rich data-structure that splits the statics and dynamics from the template. Now, when you render a page with LiveView, we convert that rendered structured into JSON. Assuming the value of @counter is 13, we would get:

{

"s": ["<p>counter: ", "</p>"],

"0": "13"

}

The client will store this data and render it. The data structure is built in a way that guarantees that length(statics) == length(dynamics) + 1. This way, for the client to stitch the actual HTML back together, all you need to do is to intersperse the dynamics, given by numeric indexes, within the statics.

Now comes the important part: when we bump the value of @counter to 14, we don’t need to send the statics again. The next JSON we send will be simply this:

{

"0": "14"

}

The client will merge the new dynamics above into its existing set of statics, resulting in the following:

{

"s": ["<p>counter: ", "</p>"],

"0": "14"

}

And to render, once again, we intersperse statics and dynamics, rebuilding the HTML structure, and update the page with morphdom!

At this point, it is worth noting .leex templates were not aware of the HTML structure. A template like this:

<p class="<%= @class %>">counter: <%= @counter %></p>

would compile to:

%Phoenix.LiveView.Rendered{

static: ["<p class=\", "\">counter: ", "</p>"],

dynamic: [@class, @counter]

}

This was an explicit design choice. We have experimented with returning a Virtual DOM from the server, but that actually increased the bandwidth usage, because a richer data structure led to bigger and more complex payloads. Representing templates as flat lists which are assembled on the client was the perfect spot.

While this provides a good enough starting point, it would be hardly useful in practice, let’s learn why.

Optimization #2: rendering trees and fingerprints

In practice, templates have complex logic in them, such as conditionals, function calls, and so on. Let’s make our template slightly more complex:

<%= if @counter == 0 do %>

<p>Nobody clicked the button yet.</p>

<% else %>

<p>counter: <%= @counter %></p>

<% end %>

<%= render_button(@counter) %>

If we convert the template above to our rendered structure, this is what we would get:

%Phoenix.LiveView.Rendered{

static: ["", "\n\n\n", ""],

dynamic: [

if(@counter == 0, do: ..., else: ...),

render_button(@counter)

]

}

As you can see, pretty much all content is dynamically generated! To solve this, we must build a tree of Rendered structures. In particular, we want to:

-

compile conditionals such that both

do and else branches also return rendered structures

-

promote functions, such as

render_button(@counter), to uses templates and return rendered structures, instead of strings

What we want to have in practice, is this:

%Phoenix.LiveView.Rendered{

static: ["", "\n\n\n", ""],

dynamic: [

if(@counter == 0) do

%Rendered{

static: ["<p>Nobody clicked the button yet.</p>"],

dynamic: []

}

else

%Rendered{

static: ["<p>counter: ", "</p>"],

dynamic: [@counter]

}

end,

render_button(@counter) #=> returns %Rendered{}

]

}

Now, when we first render the page, assuming counter is 0, this is what we get:

{

"s": ["", "\n\n\n", ""],

"0": {

"s": ["<p>Nobody clicked the button yet.</p>"]

},

"1": {

"s": ["<button phx-click=\"bump\">Click me!</button>"],

}

}

Rendering this page uses the same process as before, except it is now recursive. We start at the root, interpersing statics and dynamics. If any of the dynamics is also a JavaScript object, we apply the same rendering, and so on.

Now, if we bump the counter to 12, you could assume we should send this back:

{

"0": {"0": "12"},

"1": {}

}

As we changed rendering to be recursive, we need to also change the merging to be recursive. If we merge the above, we will get this:

{

"s": ["", "\n\n\n", ""],

"0": {

"s": ["<p>Nobody clicked the button yet.</p>"],

"0": "12"

},

"1": {

"s": ["<button phx-click=\"bump\">Click me!</button>"]

}

}

However, the above has an error in it. Can you spot it?

The representation of the conditional is mixed: it uses the statics from when @counter == 0 with the dynamics of the else branch ("0" => "13"). Effectively, there is no place to intersperse the new counter value because we have the old statics.

This is a quite tricky issue: because a dynamic expression, such as a conditional, may fully change the rendered template at any time, the rendered structure on the server may no longer match the structure on the client!

This is not only an issue with conditionals inside templates. The render_button(@counter) can also use runtime behaviour to change its template. Imagine you have a really sassy button:

def render_button(@counter) do

case rem(@counter, 3) do

0 -> ~H"<button phx-click=\"bump\">Click me!</button>"

1 -> ~H"<button phx-click=\"bump\">I dare you to click me!</button>"

2 -> ~H"<button phx-click=\"bump\">Please don't click me!</button>"

end

end

All of the templates above have different statics and, failing to send the updated statics to the page will effectively render the wrong thing.

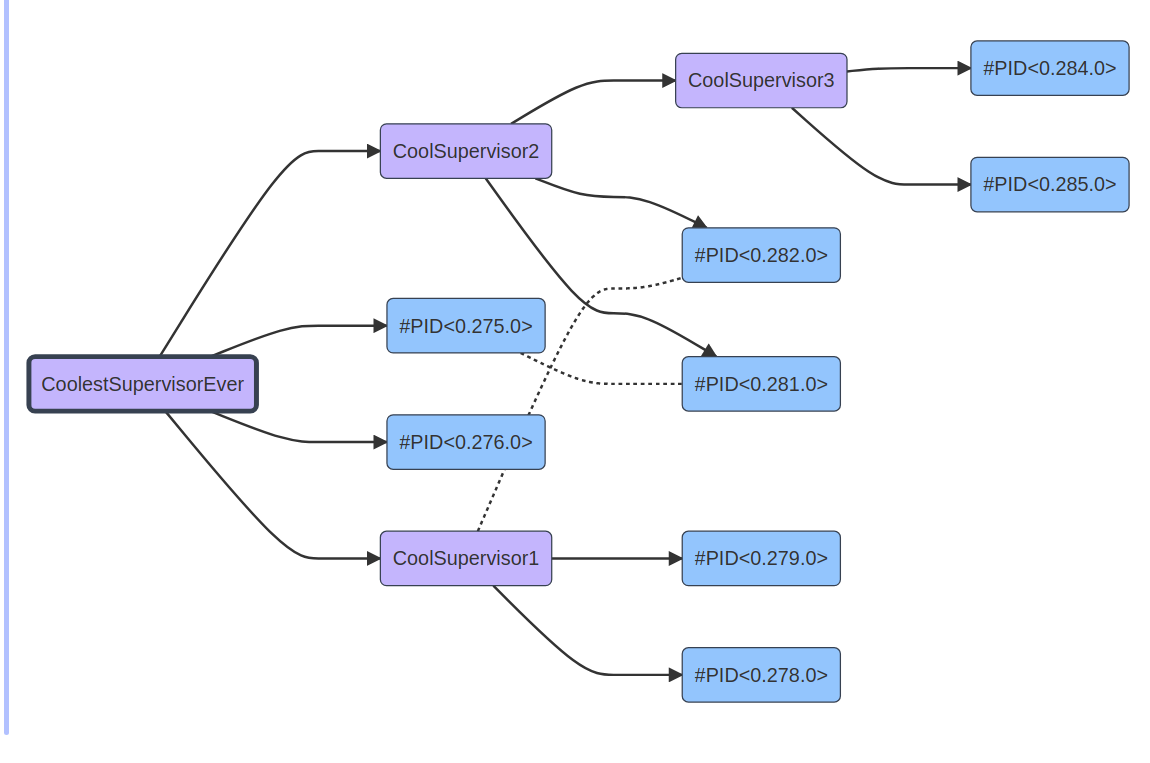

We can address this by adding template fingerprinting. Each %Rendered{} structure has a fingerprint, computed at compile-time, which is a 64bit integer representing the MD5 of the template statics and dynamics.

Now, when the server first renders a template, the server stores the fingerprint of the whole rendering tree. For example, when we first render the template, the server will keep this:

{123, #=> this is the fingerprint of the root

%{0 =>

{456, %{}}, #=> this is the fingerprint of the if in the conditional

1 =>

{789, %{}}, #=> this is the fingerprint of one of the buttons

}}

The fingerprint tree is a tree of two-element tuples, the first element being the fingerprint, the second is a map with indices of nested rendered structures inside the dynamics.

Now, when there is an update on the page, we compare the %Rendered{} structure with our fingerprint tree, if a fingerprint changes, it means that subtree has changed, and we must send both static and dynamic to the client! With these changes in place, once @counter goes from 0 to 12, we will actually send this:

{

"0": {

"s": ["<p>counter: ", "</p>"],

"0": "12"

},

"1": {}

}

The fingerprint from the conditional code changed, so we send the new statics. The button fingerprint is the same, so nothing new there.

It is worth noting this is only possible because LiveView uses stateful WebSocket connections. This means LiveView can keep the fingerprint tree per WebSocket connection in memory, which is a very lightweight representation of the template the client currently has, and know exactly when the client needs a new template or not.

Without stateful connections (or without an efficient implementation of them), you must always render the statics, defeating the purpose of the optimization. Another option is to send the fingerprints to the client, and let the client request any fingerprint it is missing, however this adds latency as rendering updates may require multiple round-trips. None of those options were acceptable to us.

This is the foundation of our optimization work. Let’s keep on moving.

Optimization #3: change tracking

When we split statics from dynamics, the main insight is that there are parts of templates that never change. We can also extend this insight to the dynamic parts themselves!

Imagine you are building a Twitter clone in 15 minutes with LiveView. To render a tweet, you would most likely have this template:

<div class="tweet-author">

by <%= @author %>

</div>

<div class="tweet-body">

<%= @body %>

</div>

<div class="tweet-bottom">

Replies: <%= @replies_count %>

Retweets: <%= @retweets_count %>

Likes: <%= @likes_count %>

</div>

A highly engaging tweet would quickly rack up several replies, retweets, and likes. However, if we want to update these counters as they arrive, every reply, retweet, or like would require us to send this JSON (note it is already without the statics):

{

"0": "John Doe",

"1": "Whole body of the tweet...",

"2": "243",

"3": "1.5k",

"4": "2.3k"

}

We would send the tweet body, the username, over and over again, while they rarely change. The more content, the more duplication. While tweets are typically short, we may broadcast this thousands of times to thousands of connected users, quickly multiplying the costs.

Given LiveView is stateful, we can also track exactly when each of the assigns (i.e. @body, @replies_count, etc) change. In your LiveView, you would most likely have this code:

def handle_info(:new_reply, socket) do

{:noreply, update(socket, :replies_count, fn count -> count + 1 end)}

end

Once the socket is updated, we need to render a new page. However, we know the only data that changed was replies_count. We use this information in our templates by slightly changing how we compile them. Broadly, we transform the tweet template to something akin to:

<div class="tweet-author">

by <%= if changed[:author], do: @author %>

</div>

<div class="tweet-body">

<%= if changed[:body], do: @body %>

</div>

<div class="tweet-bottom">

Replies: <%= if changed[:replies_count], do: @replies_count %>

Retweets: <%= if changed[:retweets_count], do: @retweets_count %>

Likes: <%= if changed[:likes_count], do: @likes_count %>

</div>

If only the replies count change, here is what we send to the browser:

{

"2": "244"

}

Now we run the same merging algorithm on the client (no changes required), build the new HTML, and render it again with morphdom.

As you can see, by tracking how @assigns are used in your templates and change overtime, LiveView automatically derives minimal data to be sent. This tracking is made trivial thanks to Elixir’s immutable data structures. With first-class immutability, it is not possible to change any of the tweet data behind the scenes. Instead, you must explicitly update data through the socket API, which allows LiveView to precisely track how data changes over time.

Achieving such tiny payloads in other stacks often require writing specialized code and/or carefully synchronizing data between client and server. With Phoenix LiveView, you get those for free!

Optimization #4: for-comprehensions

If you have been keeping track, our dynamics may have two distinct values so far:

-

a string, representing the value to inject into the template

-

another rendered structure, which is traversed recursively

We have one more trick up our sleeve. In the rainbow example, we had to render 80 <div>s to power our animation. This was done with a for-comprehension:

<%= for bar <- @rainbows do %>

<div style="color: <%= bar.color %>; height: <%= bar.height %>px" />

<%= end %>

You could imagine that, if we have three bars, we would send this JSON to the client:

[

{

"s": ["<div style=\"color: \"", "; height: ", "px\" />"],

"0": "blue",

"1": "60"

},

{

"s": ["<div style=\"color: \"", "; height: ", "px\" />"],

"0": "orange",

"1": "50"

},

{

"s": ["<div style=\"color: \"", "; height: ", "px\" />"],

"0": "red",

"1": "40"

}

]

However, doing so would be quite silly! It is obvious that everything inside a comprehension will have the exact same statics. We optimized this by compiling for-comprehensions into a new struct called Phoenix.LiveView.Comprehension. In a nutshell, the template above is compiled to:

%Phoenix.LiveView.Comprehension{

static: ["<div style=\"color: \"", "; height: ", "px\" />"],

dynamics: [

for bar <- @rainbows do

[bar.color, bar.height]

end

],

fingerprint: 798321321

}

And our JSON becomes this:

{

"s": ["<div style=\"color: \"", "; height: ", "px\" />"],

"d": [

{"0": "blue", "1": "60"},

{"0": "orange", "1": "50"},

{"0": "red", "1": "40"}

]

}

We introduced a new key, “d”, which the client must now detect. It is an indicator that we have a comprehension. Rendering comprehensions is quite trivial: for each entry in the “d” key, we intersperse its indexes with the static structure, and render each of them as we’d render a regular Rendered structure.

One curious aspect is that this optimization also applies when navigating across distinct LiveViews. For example, imagine you are on a LiveView page which shows a single tweet. When you navigate to the main timeline with dozens of tweets, if both are LiveViews, it performs a live navigation. The live navigation reuses the existing WebSocket connection and does not require a new HTTP request, no need to redo authentication, etc. Instead, live navigation starts a new LiveView, computes its new rendered tree, and sends its JSON representation. If the tweet timeline uses comprehensions, instead of repeating the markup of every tweet, we only send the compact representation seen above!

In other words, even if you are using LiveView to mostly navigate across pages, without any of its dynamic features, your users will still benefit from a faster user experience. Of course, for the particular optimization of comprehensions, page compression algorithms would also give really good results. However, with LiveView, we apply these optimizations reliably when compiling your code, instead of spending additional CPU cycles at runtime.

Intermission: seeing it altogether

Now is a good time to revisit our rainbow example! Here is what our initial template looked like:

<h1>Silky Smooth SSR</h1>

<p>Fast enough to power animations [on the server] at 60FPS</p>

<div>

<%= for bar <- @rainbows do %>

<div style="color: <%= bar.color %>; height: <%= bar.height %>px" />

<%= end %>

</div>

<p>The above animation is <%= @count %> <div> tags</p>

<p>...</p>

On every frame, 60 frames per second, without any of the optimizations we discussed, we would send this to the client:

<h1>Silky Smooth SSR</h1>

<p>Fast enough to power animations [on the server] at 60FPS</p>

<div>

<div style="color: blue; height: 40px" />

<div style="color: blue; height: 45px" />

<!-- 76 similar lines -->

<div style="color: red; height: 60px" />

<div style="color: red; height: 65px" />

</div>

<p>The above animation is 80 <div> tags</p>

<p>...</p>

As you can imagine, this is a lot of content, even for a relatively small example. With our optimizations, here is what we emit on every frame instead:

{

"0": {

"d": [

{"0": "blue", "1": "40"},

{"0": "blue", "1": "45"},

# 76 similar lines

{"0": "red", "1": "60"},

{"0": "red", "1": "65"},

]

}

}

At the end of the day, LiveView does not send “HTML over the wire”, it sends “diffs over the wire”, and it is easy to see how this can send less data by orders of magnitude on complex pages.

All optimizations I have described so far were actually part of the initial .leex templates (Live Embedded Elixir) implementation, introduced back in December 2018, roughly 3 months after LiveView announcement.

We have a few more to go through.

Optimization #5: LiveComponents

As LiveView usage grew, developers felt the need for better abstractions to compartmentalize markup, state, and events. So LiveComponents were born.

Soon after, it became clear to us that LiveComponents opened up the way for new and interesting optimizations. The way a LiveComponent works is that you define a separate module, with its own state and code:

defmodule TweetComponent do

use Phoenix.LiveComponent

def render(assigns) do

~H"""

<div class="tweet">

<div class="tweet-author">

by <%= @tweet.author %>

</div>

...

</div>

"""

end

end

Once defined, you render them like this:

Here is a tweet: <.live_component module={TweetComponent} id={tweet.id} tweet={tweet} />

And here is what is sent over the wire:

{

"c": {

"1": {

"s": ["<div class=\"tweet\">\n <div class...", ...],

"0": "John Doe",

...

}

},

"s": ["Here is your tweet: ", ""],

"0": 1

}

Instead of nesting the component inside the rendering tree, we give a unique ID (which we call CID) to each rendered component and we return the component under a special key called “c”. In this case, the CID of our rendered tweet is 1.

Now, wherever we are meant to inject the contents of the LiveComponent, we will see an integer representing its CID. For example, the before-last line of the JSON has "0": 1. This means the dynamic at index 0 must render the component with CID=1 in its place.

By placing LiveComponents outside of the rendering tree, we gain many new properties.

So far, whenever anything changed on the page, we would merge the diffs, build the whole HTML of the page, and send it to morphdom to parse and patch it. With LiveComponents, if only LiveComponents change, instead of patching the whole page, we locate the LiveComponents on the page and update them directly. Furthermore, when patching the whole page, if we find a LiveComponent and it did not change, we tell morphdom to skip it.

In order to do so, we need to able to efficiently locate LiveComponents on the page. We had different implementations of this mechanism over the last years, so I will describe the last iteration, which is simpler and more robust.

At the beginning, LiveViews rendered regular .eex (Embedded Elixir) templates. Then we wanted to separate static from dynamic and perform changing tracking, so we introduce .leex (Live Embedded Elixir). However, it quickly became clear .eex nor .leex were not expressive enough for writing rich HTML templates: all they do is text substitution. Meanwhile, users of JavaScript frameworks were enjoying the benefits of more expressive templating languages with custom components, slots, and more.

Not only that, because LiveView relies on morphdom, if you have an invalid template (for example you forgot to close a tag), the browser would attempt to render the template anyway, which mixed with morphdom‘s patching, would change the page in ways that made it often hard to find the simplest of bugs.

To address all of the needs above, Marlus Saraiva contributed .heex templates (HTML + EEx) to LiveView. It is EEx with semantic understanding of HTML. With HEEx, we enforce LiveComponents have a single root tag, as seen in our TweetComponent above. And then, when rendering the LiveComponent in browser, we automatically annotate its root tag with a data-phx-cid attribute:

<div data-phx-cid="1" class="tweet">

<div class="tweet-author">

by John Doe

</div>

...

</div>

Now finding, patching, or skipping updates on LiveComponents is extremely easy!

Before moving on to our next optimization, there is another cool property of components. For example, imagine you have this page:

<h1>Timeline</h1>

<%= for tweet <- @tweets do %>

<.live_component module={TweetComponent} id={tweet.id} tweet={tweet} />

<% end %>

If you are listing 5 tweets on a page, the data over the wire will be this:

{

"c": {

"1": {...},

"2": {...},

"3": {...},

"4": {...},

"5": {...}

},

"s": ["<h1>Timeline</h1>\n\n", ""],

"0": {

"s": ["", ""],

"d": [[1], [2], [3], [4], [5]]

}

}

In other words, we render 5 entries inside a comprehension. Each of these entries points to their CID, which we can find under the “c” key. Now, imagine you also have a button that allows you to sort the timeline, in this case, reversing the order of the tweets. Can you guess which diff will be sent over the wire?

Here it is:

{

"0": {"d": [[5], [4], [3], [2], [1]]}

}

And, when applying the patch, LiveView knows those components did not change, so it will simply move them around the page, without reparsing their HTML or recreating DOM elements!

The fact LiveView will automatically build this tiny payload, without requiring any additional instructions from developers - besides well-organizing their code with LiveComponent - is mind-blowingly awesome. And, if you need fine-grained precision over this, you can always use streams with explicit insert/delete operations, which we won’t cover today.

Optimization #6: Tree-sharing in LiveComponents

There is another optimization specific to LiveComponents worth discussing. In the previous section, we rendered 5 tweets, each as a LiveComponent.

When we first introduced LiveComponents, here is how they looked like:

{

"c": {

"1": {

"s": ["<div class=\"tweet\">\n <div class...", ...],

"0": "John Doe",

...

},

"2": {

"s": ["<div class=\"tweet\">\n <div class...", ...],

"0": "Jane Doe",

...

},

"3": {

"s": ["<div class=\"tweet\">\n <div class...", ...],

"0": "Joe Armstrong",

...

},

...

}

}

As you can see, we are sending the same statics over and over again! We solved a similar problem when optimizing comprehensions and, this time around, we can do something even better.

Given we keep fingerprint trees on the server, when we render a LiveComponent, we check if we have already rendered another component with the same name, such as TweetComponent. If yes, and the fingerprint of the component we are currently rendering matches the fingerprint of the one previously rendered, then we annotate the component to reuse the statics.

This is done by setting the "s" key of the JSON to an integer. However, there is a trick: we first attempt to find a matching fingerprint of a component that we already sent to the client. If there is one, we avoid sending the statics altogether by setting the "s" key to -CID. Otherwise, we set the key to the CID of a component that is being sent in the same JSON response.

Overall, on the first render with five tweets, we would get this:

{

"c": {

"1": {

"s": ["<div class=\"tweet\">\n <div class...", ...],

"0": "John Doe",

...

},

"2": {"s": 1, "0": "Jane Doe", ...},

"3": {"s": 1, "0": "Joe Armstrong", ...},

...

}

}

Now, whenever the “s” key is an integer, the client must copy the statics of the matching component.

If you later push another tweet to the client, we skip sending the statics altogether, since we know the client already has them. The payload would look like this:

{

"c": {

"6": {"s": -1, "0": "Jane Doe", ...}

}

}

You may wonder why the positive/negative CID. Because a component may be updated at any time, include its rendering tree, we could have a payload like this:

{

"c": {

"1": {

"s": ["<div class=\"mega-tweet\">\n <div class...", ...],

"0": "John Doe"

},

"6": {"s": -1, "0": "Jane Doe", ...}

}

}

As you can see, the component with CID=1 is updating the statics on the page. Therefore, which statics should we use for CID=6? The sign of the integer tells us if we should use the old (negative) or new (positive) version. This is also why, since the beginning of the article, we started counting CIDs from 1. The more you know!

Finally, as the title of this optimization says, we are not only sharing the immediate statics of the component, but the whole component tree.

Optimization #7: Change-tracking revisited

After a trip down the memory lane, we are finally ready to discuss the optimization we recently added to LiveView. This optimization uses several techniques previously discussed but, unlike them, it benefits the client exclusively. The initial idea for this optimization came to life after watching one of Fireship videos on client-side frameworks (unfortunately I can no longer recall which one).

We know the JSON we send to the client is a tree of rendered structures. When we talked about nesting, we showed this example:

<%= if @counter == 0 do %>

<p>Nobody clicked the button yet.</p>

<% else %>

<p>counter: <%= @counter %></p>

<% end %>

<%= render_button(@counter) %>

In an actual page, we may have several conditionals, each branch with their rendered structs. Each function call or each component that we call in the template, may have their own subtrees too. We also know that, if part of the template does not change, the server won’t send an update for it.

Let’s slightly change the template above to show this in practice:

<p>Hello, <%= @username %></p>

<%= if @counter == 0 do %>

<p>Nobody clicked the button yet.</p>

<% else %>

<p>counter: <%= @counter %></p>

<% end %>

The first time we render it, assuming @counter is 13 and @username is "John Doe", we will get this:

{

"s": ["<p>Hello, ", "</p>\n\n", ""],

"0": "John Doe",

"1": {

"s": ["<p>counter: ", "</p>"],

"0": "13"

}

}

Now, if only @username changes, this is the diff we get:

{

"0": "Jane Doe"

}

In other words, by not sending "1": ..., the server is telling us that a whole subtree did not change. If the subtree did not change, could we perhaps avoid building all of its HTML and stop asking morphdom to parse and morph something that, we know for certain, stays the same?

However, we cannot simply remove the element from the HTML. We still need to track its position in the overall page. Effectively, what we need to do, is to find a way to uniquely identify the subtree and render only its root tag.

Wait a second, this sounds suspiciously close to what we did LiveComponents?

If the server can tell us which rendered structure has a single root tag (which the server knows, thanks to HEEx templates), then we can use this information to annotate DOM elements with unique IDs. And if the elements represented by unique IDs did not change, we can tell morphdom to skip them.

Alright, let’s see how this is done in practice. When we first render the page above, once again assuming @counter is 13 and @username is "John Doe", this is the JSON we get:

{

"s": ["<p>Hello, ", "</p>\n\n", ""],

"0": "John Doe",

"1": {

"r": 1,

"s": ["<p>counter: ", "</p>"],

"0": "13"

}

}

The only difference is a new "r": 1 annotation, which informs us that the subtree is wrapped by a single root element. Given this is the initial render, we can build its HTML directly, without morphdom:

<p>Hello, John Doe</p>

<p data-phx-magic-id="1">counter: 13</p>

Due to the root annotation, we slightly modified how the root tag is rendered, giving it a data-phx-magic-id. Each new root tag gets a new auto-incrementing “magic-id”.

Now, when the username updates, since the subtree did not change, here is what we will give to morphdom:

<p>Hello, John Doe</p>

<p data-phx-magic-id="1" data-phx-skip></p>

We only render the root tag, without any of its contents, nor any of its other attributes. We then instruct morphdom to, when it finds an element with a matching “magic-id”, it should ignore the update and keep the previous element as is. There is no need to build, parse, or traverse its DOM structure!

This optimization applies every time a rendered structure has a root tag and it does not change. In the example above, the benefits seem to be minimal, but in practice this optimization triggers all the time. For example, if you look at the CoreComponents generated by Phoenix, you can see all default function components, the amount of markup they have, all wrapped in a single root tag. All of them are now skipped by the client whenever they don’t change.

We tried this optimization in LiveBeats, TodoTrek, and Livebook and we saw 5-10x improvements on full patch time, as measured by liveSocket.enableProfiling() (call that in your browser console to measure for yourself). Community members have reported gains between 3-30x!

And, once again, LiveView developers don’t have to modify a single line of code to benefit from this. We literally had to change only a single line of code on LiveView server code to make it possible. All thanks to all of the infrastructure and optimizations we built in these last 5 years.

Amazing!

Summary

This was quite a long post, but I hope it highlights and documents all the engineering work put into LiveView’s rendering stack. From a debugging point of view, you can invoke liveSocket.enableProfiling() and liveSocket.enableDebug() in your browser console to get more visibility into the optimizations we discussed today.

The combination of the Erlang VM, immutable data structures, and LiveView’s unique integration between the server and the client, yields massive benefits on latency, bandwidth, and client rendering, which put together are hard - and sometimes even impossible - to replicate elsewhere.

Personally speaking, I am really proud of this work. It leverages data-structures and compiler techniques that go beyond the developer experience and directly translates to better user experiences.

I have also enjoyed the countless hours and conversations I had with Chris McCord on these topics, alongside the great memories we built along the way (and thank you for writing all of the JavaScript, so I don’t have to!).

Give Phoenix a try to experience LiveView and all of its performance benefits. Maybe someday you will have a new optimization (without having to modify a single line of code)!

]]>

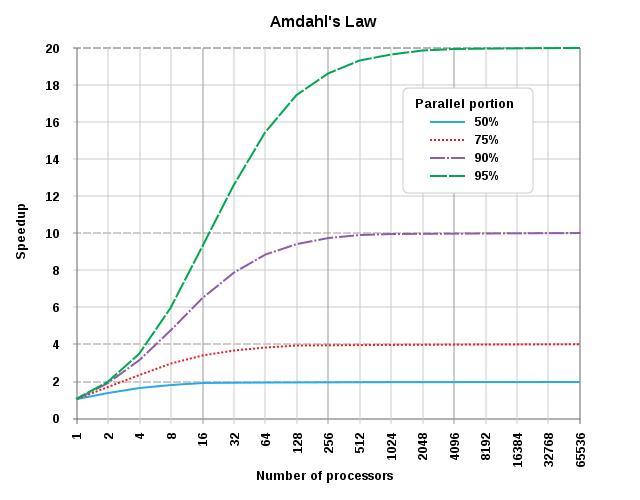

Amdahl's law applied to number of processors. [From Wikipedia, CC BY-SA 3.0.](https://en.wikipedia.org/wiki/Amdahl%27s_law#/media/File:AmdahlsLaw.svg)

Amdahl's law applied to number of processors. [From Wikipedia, CC BY-SA 3.0.](https://en.wikipedia.org/wiki/Amdahl%27s_law#/media/File:AmdahlsLaw.svg)